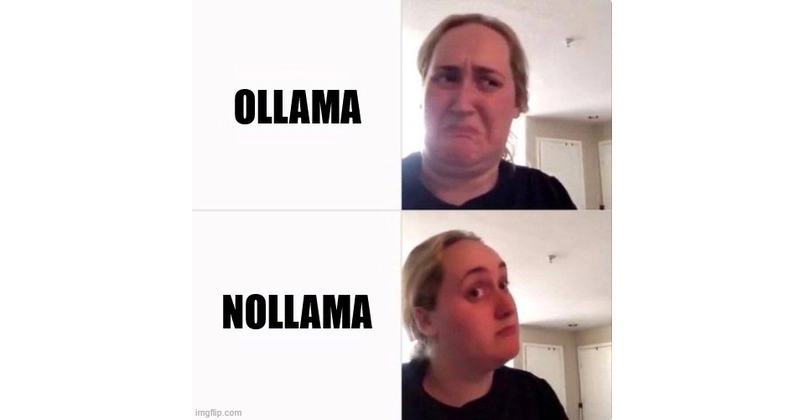

Your Intel Laptop Runs LLMs Now—No NVIDIA Needed [Benchmarks]

Everyone thought LLMs demanded NVIDIA GPUs or cloud servers. NoLlama flips the script: your Intel laptop's NPU can now handle local AI, streaming chat and running vision models with no extra setup.

⚡ Key Takeaways

- NoLlama runs LLMs on Intel NPU, iGPU, discrete GPU, and CPU with no configuration needed.

- Auto-detects hardware, supports OpenAI/Ollama APIs, streaming chat, and vision models locally.

- Suited to sensitive data (GDPR, medical, legal): inference stays on the device, so nothing leaks to the cloud and audits are simpler.

- Benchmarks: NPU ~5 tok/s on an 8B model, iGPU 15-20 tok/s on VLMs; efficiency trumps raw speed.

- Predicts an NPU shift comparable to the smartphone ARM revolution, with edge AI going mainstream by 2026.
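
Because the takeaways mention OpenAI-compatible APIs and streaming chat, a client could talk to the local server roughly as sketched below. This is a minimal illustration, not NoLlama's documented interface: the address `http://localhost:8000/v1`, the model name `local-8b-model`, and the helper names are all assumptions made for the example. Only the request and response shapes follow the public OpenAI chat-completions streaming format.

```python
import json
import urllib.request

# Assumptions (not from the article): server address and model name.
BASE_URL = "http://localhost:8000/v1"   # hypothetical local endpoint
MODEL = "local-8b-model"                # hypothetical model identifier


def build_chat_request(prompt, model=MODEL, base_url=BASE_URL):
    """Build a streaming request in the OpenAI chat-completions format."""
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "stream": True,  # ask the server to send tokens as they are generated
    }
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_sse_line(raw):
    """Return the text fragment in one server-sent-event line, or None."""
    line = raw.decode("utf-8").strip()
    if not line.startswith("data:"):
        return None                      # blank keep-alive or comment line
    data = line[len("data:"):].strip()
    if data == "[DONE]":
        return None                      # end-of-stream sentinel
    choices = json.loads(data).get("choices") or []
    if not choices:
        return None
    return choices[0].get("delta", {}).get("content")


def stream_chat(prompt):
    """Yield text fragments as the local server produces them."""
    with urllib.request.urlopen(build_chat_request(prompt)) as response:
        for raw in response:
            text = parse_sse_line(raw)
            if text:
                yield text


# Usage, with a compatible server running at BASE_URL:
#   for fragment in stream_chat("Summarize GDPR in one sentence."):
#       print(fragment, end="", flush=True)
```

Since the prompt and the reply only travel over `localhost` in this setup, the request never leaves the machine, which is the property the sensitive-data takeaway relies on.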

Originally reported by Dev.to