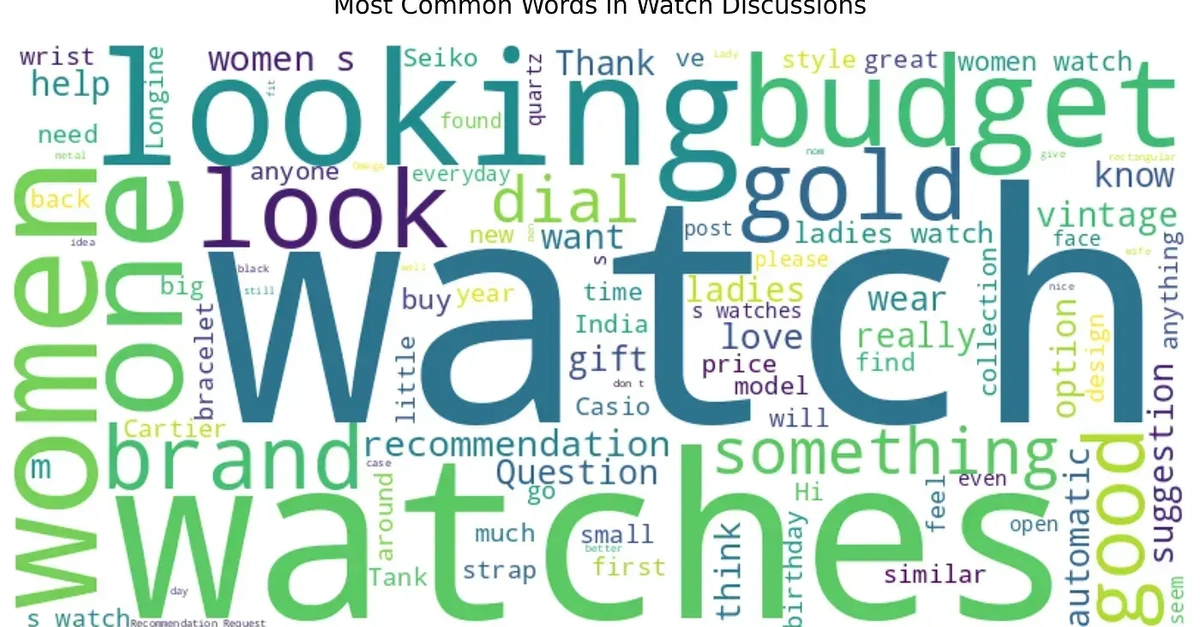

Did you know that 90% of online watch discussions are dominated by men? It’s a staggering number that leaves a massive blind spot for anyone trying to understand the other half of the market. But what if you could peel back that layer and actually hear what women are saying about the timepieces they love? That’s precisely the ambitious goal behind a recent project that scraped Reddit, applied some serious Natural Language Processing (NLP) muscle, and unearthed some fascinating, and frankly, long-overdue insights into women’s watch preferences.

This isn’t just some academic exercise; it’s a practical demonstration of how open-source tools can cut through the digital cacophony to deliver actionable intelligence. The pipeline, built entirely with standard Python libraries like requests, pandas, nltk, and scikit-learn, shows a clear path from raw, unfiltered internet chatter to meaningful data visualizations. It’s like building a custom telescope to zoom in on a previously unseen galaxy of consumer desires.

Bypassing the Gates: Scraping Without API Keys

The initial hurdle for many data projects is access. Official APIs can be restrictive, requiring keys, approvals, and often come with rate limits that choke off larger-scale endeavors. This project sidestepped that entirely by directly hitting Reddit’s public JSON endpoints. Think of it as finding a secret back door instead of waiting in a mile-long line.

This method, detailed in reddit_json_scraper.py, involves constructing specific search URLs that cast a wide net across relevant subreddits. The raw JSON blobs are then wrangled into a more digestible format using a helper function, extract_post_data. This function flattens the nested Reddit structure, pulling out exactly what’s needed: post IDs, subreddit names, titles, text bodies, scores, comment counts, and timestamps. The result? A clean, structured dataset that’s ready for deeper analysis.

The core collection loop orchestrates this, iterating through URLs, fetching JSON, and amassing a growing list of posts. Crucially, it doesn’t stop at just the initial post. It also dives into comments for the most engaging threads—those with the highest combined score and comment count—by again hitting post-specific JSON endpoints. This ensures that the nuances and follow-up conversations are captured, painting a much richer picture.

Cutting Through the Noise: Filtering for Relevance

Now, here’s where it gets tricky. Reddit is a firehose, and not every mention of “women” or “watch” is relevant. The project recognized this inherent noise and implemented a smart filtering layer in filter_posts.py. The goal was to distinguish genuine discussions about women’s watches from men asking for themselves or offering advice directed at male audiences.

This was achieved with a clever regex filter. It flags posts containing phrases like “as a man” or “for men,” effectively neutralizing them. But it keeps posts that clearly indicate a focus on women, such as “gift for my wife” or “buying for her.” The NON_FILTER_PATTERNS variable, as defined, captures this intent beautifully, ensuring that the subsequent analysis is focused on the intended demographic.

We flag posts that contain phrases like “as a man” or “for men”: … but we keep posts that clearly talk about buying for a woman, e.g. “gift for my wife”: NON_FILTER_PATTERNS=r”(for|gift|buying|getting|choosing|help).*(mum|mom|mother|wife|girlfriend|partner|daughter|sister|woman|female|her|she)”

The filter_check function combines the title and body text, applies these patterns, and outputs a filtered_posts.csv. This cleaned dataset becomes the bedrock for all the NLP magic that follows.

Unpacking Preferences: NLP Takes the Stage

With the data prepped, the real analysis kicks off in watch_analyzer.py. The heart of this module is a single class designed to process the filtered posts and comments. It consolidates all text, sets up NLP tools like NLTK and VADER for sentiment analysis, and then gets to work.

After cleaning URLs and standardizing whitespace, a combined_text column is generated for each post. VADER, a lexicon and rule-based sentiment analysis tool, is then applied. It churns out sentiment scores, specifically a sentiment_compound score, which is then categorized into ‘positive’, ‘neutral’, or ‘negative’ labels. This sentiment analysis is performed on both posts and comments, offering a dual perspective on the discussions. The resulting sentiment distribution is then visualized, giving an immediate feel for the overall tone of conversations surrounding women’s watches.

Beyond Sentiment: Brands, Styles, and Price Points

But sentiment is just one piece of the puzzle. The project dive into more tangible aspects of watch preferences. It meticulously counts mentions of specific brands, drawing from a curated list that spans from accessible labels like Casio and Seiko to luxury titans like Rolex and Patek Philippe. This brand-spotting provides a clear hierarchy of what’s being discussed and desired.

Price is another critical dimension. Using regex patterns tailored for Indian currency symbols (₹, rs, inr) and keywords like “rupees,