It turns out that 47% of developers report difficulty testing complex backend logic. That’s a startling figure, and one that hints at a widespread problem in how we architect our systems. Often, the culprit isn’t a lack of skill, but the lack of a truly modular design. This is precisely where the Pipeline Pattern shines, offering a cleaner, more maintainable way to build Go applications.

Think about the last time you wrestled with a sprawling Go function, trying to isolate a single piece of functionality for a unit test. Frustrating, right? It’s a common scene, and it’s largely a symptom of tightly coupled code, where one stage of an operation is intrinsically bound to the next.

The Tangled Mess: Life Without Pipelines

Consider a typical backend job, like scraping job listings from various sites. The process usually involves several distinct steps: pulling raw data, cleaning it up (normalization), assigning relevance scores, and finally, storing it in a database. In a traditional, ‘monolithic’ approach, all these steps might be crammed into a single loop.

    for _, raw := range rawJobs {
        // normalize
        raw.Title = strings.TrimSpace(raw.Title)
        raw.Location = strings.ReplaceAll(raw.Location, "NYC", "New York")

        // score
        score := 0
        for _, keyword := range keywords {
            if strings.Contains(raw.Title, keyword) {
                score++
            }
        }

        // save
        s.Repo.Create(raw.Title, raw.Location, score)
    }

This might seem straightforward on the surface, but it’s a breeding ground for problems. Stage visibility? Forget it. You have to read the entire block to grasp where normalization ends and scoring begins. Testing becomes an exercise in futility – you can’t easily test the scoring logic in isolation; it’s inextricably linked to the normalization and saving processes. Modifying a single rule, say, adding a new scoring criterion, means gingerly probing a complex, interconnected structure, risking unintended side effects elsewhere. And reusing that normalization logic? That’s copy-paste territory, leading to code duplication and maintenance headaches. Concurrency, the holy grail of modern backend systems, feels like an alien concept when everything is so deeply tangled.

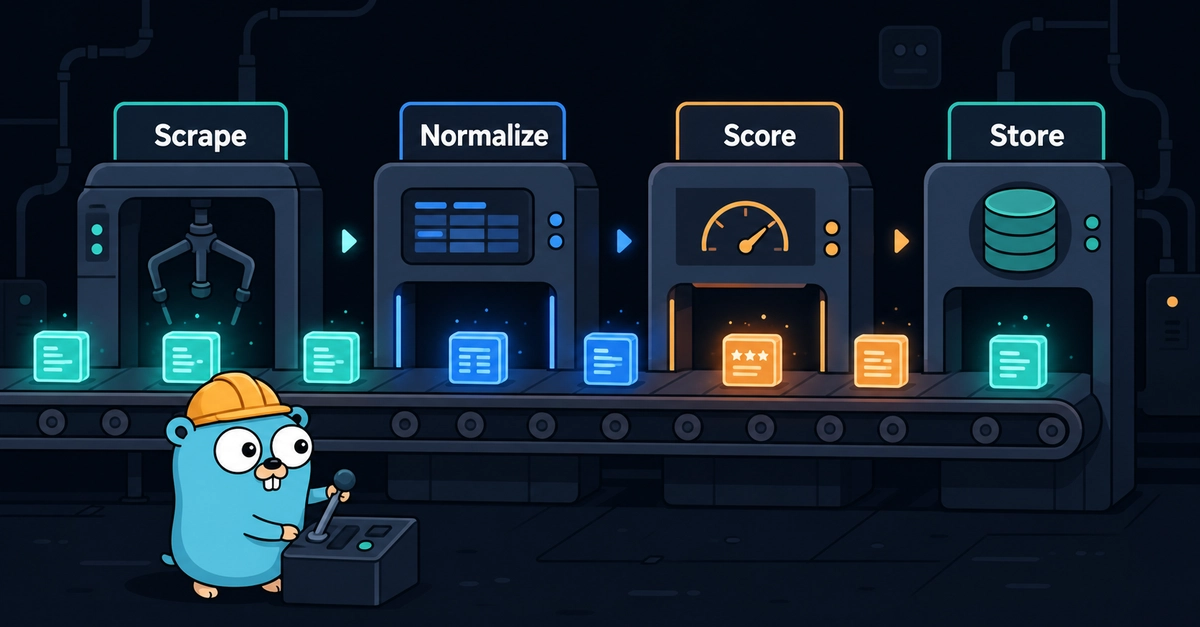

Enter the Pipeline: Explicit Stages, Clear Flow

The core idea behind the Pipeline Pattern is deceptively simple: break down a complex process into a series of discrete, independent stages, with data flowing sequentially from one to the next. Instead of one giant function trying to do everything, you have Scrape → Normalize → Score → Store. Each stage is a self-contained unit, responsible for one specific task.
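
As a rough sketch of what "one stage, one task" can look like, the normalization and scoring steps from the loop above could be pulled out into standalone functions. The `Job` type and the names `NormalizeJob` and `ScoreJob` are hypothetical and chosen for illustration only; the actual project's types may look different.

```go
package main

import "strings"

// Job is a hypothetical record type used only for this sketch.
type Job struct {
	Title    string
	Location string
	Score    int
}

// NormalizeJob is the normalization stage. It trims the title and expands
// the "NYC" abbreviation, mirroring the rules from the monolithic loop.
func NormalizeJob(j Job) Job {
	j.Title = strings.TrimSpace(j.Title)
	j.Location = strings.ReplaceAll(j.Location, "NYC", "New York")
	return j
}

// ScoreJob is the scoring stage. It awards one point for every keyword
// found in the title and returns a copy of the job with Score set.
func ScoreJob(j Job, keywords []string) Job {
	score := 0
	for _, keyword := range keywords {
		if strings.Contains(j.Title, keyword) {
			score++
		}
	}
	j.Score = score
	return j
}
```

Because each function takes a value and returns a value, either stage can be called with a hand-built `Job` and checked on its own, with no scraper or database involved.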

The benefits here are immediate. Readability skyrockets, because the structure itself dictates the flow of operations. Testing becomes a breeze: you can spin up individual stages with mock data and verify their behavior in isolation. Modifying or extending the pipeline is as simple as swapping out or adding new stages, without disturbing the existing components. Code reuse? It’s baked in. Want to reuse the normalization logic elsewhere? Just import the package. And concurrency? It becomes far more manageable, as you can more easily identify independent stages that can be run in parallel.
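
To make the concurrency point concrete, here is one possible sketch of stages connected by channels, a common Go idiom. It is not taken from the job scraper itself, whose stages run sequentially; the helper names `emit` and `stage` are hypothetical, and the generic signatures assume Go 1.18 or later.

```go
package main

import "sync"

// emit feeds the items of a slice into a channel and closes it when done.
func emit[T any](items []T) <-chan T {
	out := make(chan T)
	go func() {
		defer close(out)
		for _, item := range items {
			out <- item
		}
	}()
	return out
}

// stage applies fn to every value received from in, using the given number
// of worker goroutines, and sends the results on the returned channel.
// The output channel is closed once the input is drained and all workers
// have finished. With more than one worker, output order is not guaranteed.
func stage[T, U any](in <-chan T, workers int, fn func(T) U) <-chan U {
	if workers < 1 {
		workers = 1 // always drain the input, even for a bad worker count
	}
	out := make(chan U)
	var wg sync.WaitGroup
	for i := 0; i < workers; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for v := range in {
				out <- fn(v)
			}
		}()
	}
	go func() {
		wg.Wait()
		close(out)
	}()
	return out
}
```

Stages compose by nesting calls, for example `stage(stage(emit(jobs), 4, normalizeFn), 4, scoreFn)`, where `normalizeFn` and `scoreFn` stand in for whatever per-item functions a project defines. A production version would likely also accept a `context.Context` so that workers can stop early on cancellation.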

Wiring It Up: Flexibility Through Interfaces

The magic truly happens when these stages are wired together using interfaces. This is the secret sauce that allows for the extraordinary flexibility the Pipeline Pattern offers. In the example from the job scraper, notice how the Pipeline struct accepts dependencies like scorer and jobService through interfaces in its constructor (NewPipeline).

    type Pipeline struct {
        scorer         scoring.Scorer
        jobService     JobService
        companyService CompanyService
        logger         *slog.Logger
    }

    func NewPipeline(
        scorer scoring.Scorer,
        jobService JobService,
        companyService CompanyService,
        logger *slog.Logger,
    ) *Pipeline {
        return &Pipeline{
            scorer:         scorer,
            jobService:     jobService,
            companyService: companyService,
            logger:         logger,
        }
    }

This dependency injection means the Pipeline itself doesn’t care how the scoring is done or where the jobs are saved, only that it receives an object that conforms to the expected interface. The Run() method then orchestrates the flow:

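    func (p *Pipeline) Run(ctx context.Context, scraper Scraper) error {
        // 1. Scrape
        rawJobs, err := scraper.Scrape(ctx)
        if err != nil {
            return fmt.Errorf("scraping %s: %w", scraper.Source(), err)
        }

        // Count outcomes so that one bad record does not abort the whole run.
        var saved, failed int

        for _, rawJob := range rawJobs {
            // 2. Normalize
            job, err := normalize.Normalize(rawJob)
            if err != nil {
                failed++
                continue
            }

            // 3. Score
            job.Score = p.scorer.Score(job)

            // 4. Save
            if err := p.jobService.Save(ctx, job); err != nil {
                failed++
                continue
            }
            saved++
        }

        // Report the counters. Logging them here is an assumption; the
        // original snippet does not show what happens to saved and failed.
        p.logger.Info("pipeline run finished",
            "source", scraper.Source(), "saved", saved, "failed", failed)
        return nil
    }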

This clean separation allows you to swap out entire components without altering the core pipeline logic. Need a different scraper for a new job board? Inject a new Scraper implementation. Want to test with an in-memory database instead of PostgreSQL? Pass in a JobService backed by memory, such as an InMemoryStore. Considering a machine learning model for scoring? Simply provide a Scorer that implements that logic.
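
The swap can be sketched as follows. The article does not show the interface definitions, so the `Scorer` and `JobService` shapes below are assumptions inferred from how `Run` calls them, and `Job`, `KeywordScorer`, `scoreAndSave`, and `demo` are hypothetical names used only for this illustration.

```go
package main

import (
	"context"
	"strings"
	"sync"
)

// Job is a hypothetical, simplified record type.
type Job struct {
	Title string
	Score int
}

// Scorer and JobService approximate the interfaces implied by Run;
// the real definitions are assumptions here.
type Scorer interface {
	Score(job Job) int
}

type JobService interface {
	Save(ctx context.Context, job Job) error
}

// KeywordScorer awards one point per keyword found in the title.
type KeywordScorer struct{ Keywords []string }

func (s KeywordScorer) Score(job Job) int {
	score := 0
	for _, keyword := range s.Keywords {
		if strings.Contains(job.Title, keyword) {
			score++
		}
	}
	return score
}

// InMemoryStore keeps saved jobs in a slice, standing in for a database.
type InMemoryStore struct {
	mu   sync.Mutex
	jobs []Job
}

// Compile-time check that the fake satisfies the interface.
var _ JobService = (*InMemoryStore)(nil)

func (s *InMemoryStore) Save(ctx context.Context, job Job) error {
	if err := ctx.Err(); err != nil {
		return err // respect cancellation, as a real store would
	}
	s.mu.Lock()
	defer s.mu.Unlock()
	s.jobs = append(s.jobs, job)
	return nil
}

// Jobs returns a copy of everything saved so far.
func (s *InMemoryStore) Jobs() []Job {
	s.mu.Lock()
	defer s.mu.Unlock()
	out := make([]Job, len(s.jobs))
	copy(out, s.jobs)
	return out
}

// scoreAndSave depends only on the interfaces, never on concrete types.
func scoreAndSave(ctx context.Context, scorer Scorer, store JobService, jobs []Job) error {
	for _, job := range jobs {
		job.Score = scorer.Score(job)
		if err := store.Save(ctx, job); err != nil {
			return err
		}
	}
	return nil
}

// demo wires the fakes together the way a test might.
func demo() ([]Job, error) {
	store := &InMemoryStore{}
	scorer := KeywordScorer{Keywords: []string{"Go"}}
	jobs := []Job{{Title: "Go Engineer"}, {Title: "Designer"}}
	err := scoreAndSave(context.Background(), scorer, store, jobs)
	return store.Jobs(), err
}
```

Replacing `InMemoryStore` with a PostgreSQL-backed type, or `KeywordScorer` with a model-backed one, would leave `scoreAndSave` untouched, which is the property the paragraph above describes.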

A Historical Echo: The Assembly Line Revolution

This modularity isn’t just a neat trick; it echoes a fundamental shift in industrial production. Henry Ford’s assembly line revolutionized manufacturing by breaking down the complex task of building a car into a series of simple, repeatable steps performed by specialized workers or machines. Each station performed one job, and the product moved linearly from one to the next. The result? Unprecedented efficiency, standardization, and scalability. The Pipeline Pattern applies this same principle to software development, transforming monolithic, hard-to-manage codebases into elegant, efficient, and highly adaptable systems.

The Bottom Line: Why This Matters for Developers

For developers, embracing the Pipeline Pattern means writing code that is not only easier to test but also more enjoyable to work with. It leads to systems that are easier to understand, debug, and extend. The 47% statistic I mentioned earlier? Adopting this pattern is one practical way to start bringing that number down, because each stage can be tested on its own. It’s a clear win for maintainability, testability, and ultimately, for the speed at which we can innovate.

Frequently Asked Questions

What does the Pipeline Pattern do in Go?

It structures Go applications by breaking down complex processes into a series of independent, sequential stages, allowing for modularity, testability, and flexibility.

Is this pattern suitable for all Go projects?

Not every project needs it. It’s particularly effective for data processing, ETL (Extract, Transform, Load), asynchronous task execution, and any workflow involving multiple discrete steps where modularity is beneficial.

How does this improve testing?

By isolating each stage of the process, you can write unit tests for individual components without needing to set up the entire system, making testing faster and more targeted.