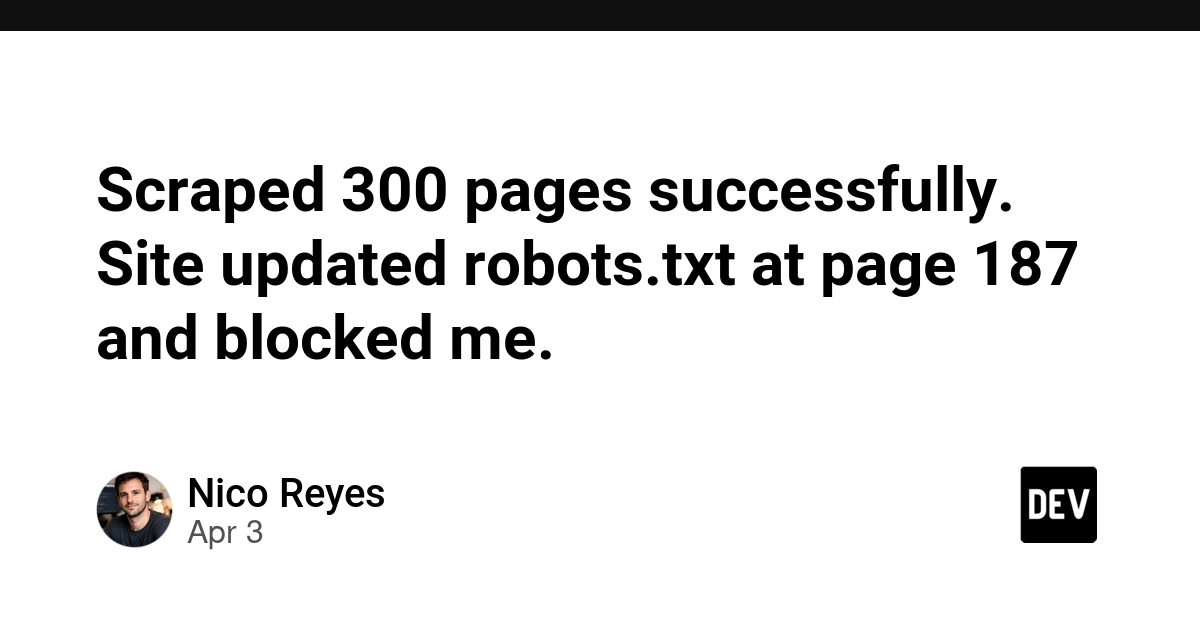

The Mid-Scrape Trap: Why Checking robots.txt Once Costs You IP Bans

A developer scraped 187 pages successfully, then hit a wall: the site updated its robots.txt while the scraper was still running. The lesson, learned the hard way, is that checking robots.txt once isn't enough.

⚡ Key Takeaways

- Sites can update robots.txt mid-scrape; a scraper that checks it only at startup keeps following rules that may no longer apply, which leaves it open to IP bans

- Smaller ecommerce platforms change robots.txt reactively when traffic spikes, often in the middle of overnight scraping jobs

- Re-fetching robots.txt every 5 minutes limits any violation of newly added rules to at most one refresh interval, usually before it triggers a ban
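
The periodic-refresh idea from the last takeaway can be sketched with Python's standard-library `urllib.robotparser`. This is a minimal sketch, not the original developer's code. The `RefreshingRobots` class, its `fetch` and `clock` hooks, the `change_count` counter, and the `shop.example` demo site are hypothetical names introduced here for illustration. The 300-second default simply mirrors the 5-minute interval above. One detail matters: the sketch builds a new `RobotFileParser` on every refresh, because `parse()` adds to the rules a parser already holds instead of replacing them.

```python
import logging
import time
import urllib.request
from typing import Callable, Optional
from urllib.robotparser import RobotFileParser

REFRESH_INTERVAL_S = 300.0  # 5 minutes, the interval suggested in the takeaways


def fetch_robots_txt(url: str, timeout: float = 10.0) -> str:
    """Download robots.txt as text (needs network access; raises on HTTP errors)."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read().decode("utf-8", errors="replace")


class RefreshingRobots:
    """Hypothetical helper: re-reads robots.txt once the cached copy is `interval` seconds old."""

    def __init__(
        self,
        robots_url: str,
        fetch: Callable[[str], str] = fetch_robots_txt,
        interval: float = REFRESH_INTERVAL_S,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self.robots_url = robots_url
        self.fetch = fetch
        self.interval = interval
        self.clock = clock
        self.change_count = 0  # how many refreshes found different rules
        self._parser: Optional[RobotFileParser] = None
        self._text: Optional[str] = None
        self._fetched_at = 0.0

    def _refresh(self) -> None:
        text = self.fetch(self.robots_url)
        # Build a fresh parser each time: RobotFileParser.parse() adds to the
        # rules a parser already holds instead of replacing them, so reusing
        # one object could keep matching the old rules.
        parser = RobotFileParser(self.robots_url)
        parser.parse(text.splitlines())
        if self._text is not None and text != self._text:
            self.change_count += 1
            logging.getLogger(__name__).warning("robots.txt changed: %s", self.robots_url)
        self._parser, self._text = parser, text
        self._fetched_at = self.clock()

    def can_fetch(self, user_agent: str, url: str) -> bool:
        # Refresh on first use and whenever the cached rules are older than the interval.
        if self._parser is None or self.clock() - self._fetched_at >= self.interval:
            self._refresh()
        return self._parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # Offline demo: a fake shop that disallows /products partway through a crawl.
    site = {"robots": "User-agent: *\nDisallow: /admin\n"}
    now = {"t": 0.0}
    robots = RefreshingRobots(
        "https://shop.example/robots.txt",
        fetch=lambda url: site["robots"],
        clock=lambda: now["t"],
    )
    print(robots.can_fetch("my-bot", "https://shop.example/products/1"))  # True
    site["robots"] = "User-agent: *\nDisallow: /products\n"  # the site reacts to traffic
    now["t"] = 120.0
    print(robots.can_fetch("my-bot", "https://shop.example/products/2"))  # True (cached rules)
    now["t"] = 301.0
    print(robots.can_fetch("my-bot", "https://shop.example/products/3"))  # False (refreshed)
```

Injecting `fetch` and `clock` lets the demo run offline. A real crawler would keep the defaults, which download the file with `urllib.request` and measure age with `time.monotonic`. Calling `can_fetch()` before every request usually just reuses the cached parser and costs at most one re-download per interval. If a refresh fails, `fetch_robots_txt` raises (for example `urllib.error.HTTPError` on a 404), and the error propagates instead of silently reusing old rules. By contrast, the stdlib's own `RobotFileParser.read()` treats most 4xx responses as "allow all" and 401/403 as "disallow all", so choose whichever behavior suits your crawler.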

Originally reported by Dev.to