90% Token Slash: One Dev's Markdown Second Brain Built on Claude Code

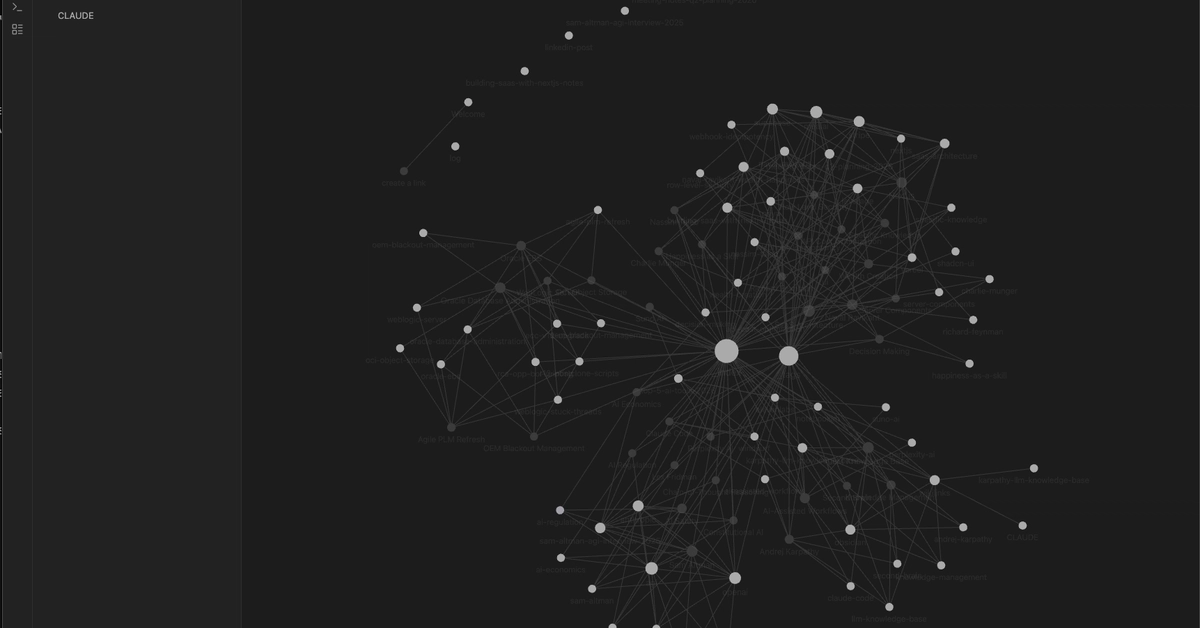

Drop 50 raw files into a folder, and Claude turns them into 44 interconnected wiki pages, cutting LLM token usage by a reported 90%. No fancy databases required.

Originally reported by Dev.to