How One Developer Built a Framework to Stop AI Agents From Forgetting Everything

Claude forgets. Gemini costs money. So one engineer built a system to give AI agents real memory, task discipline, and the ability to coordinate with each other—and it's already shipping real products.

⚡ Key Takeaways

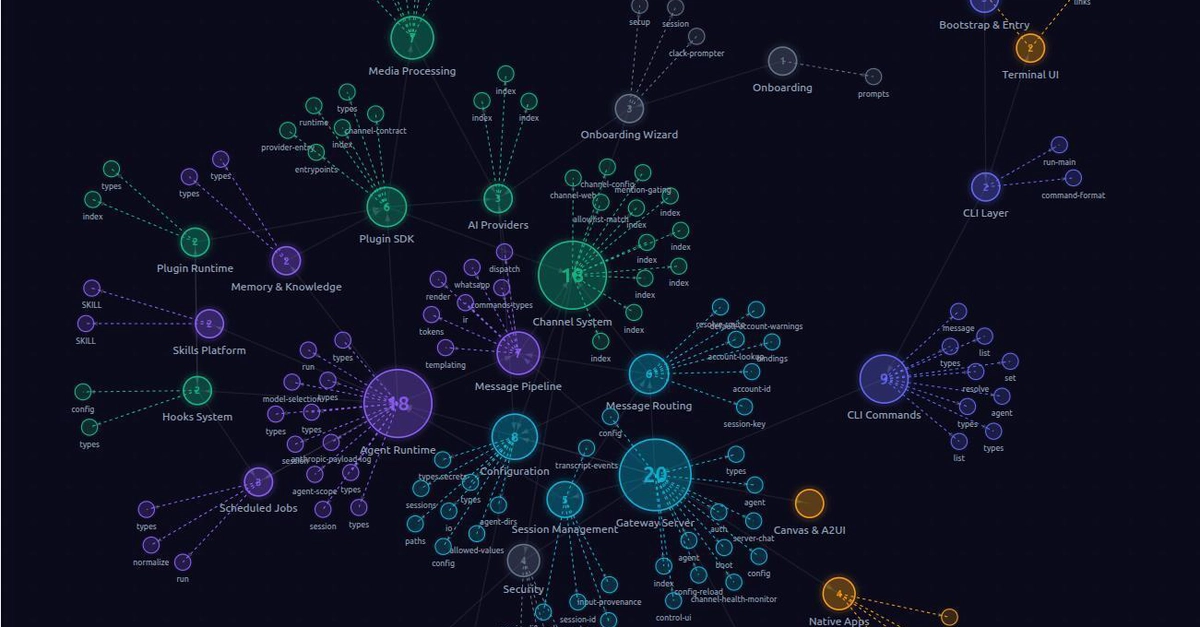

- AI agents don't fail because models are dumb; they fail because context management is hard. Persistent memory via markdown files and task tracking beats hoping for larger context windows.

- Structural enforcement beats self-regulation. You can't rely on an LLM to follow rules as consistently as a careful human would. Build guardrails into the execution environment itself.

- Real agents need three things: historical awareness (what happened before), architectural understanding (what's connected to what), and task discipline (every action traces back to a tracked task). Without all three, the agent drifts or breaks things.

- The winning framework won't be the most impressive in a demo. It'll be the one that solved boring, practical problems like multi-agent coordination and persistent memory. This one already ships real products.
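
To make the first three takeaways concrete, here is a minimal sketch in Python of how markdown-backed memory and structural task enforcement could fit together. It is not the framework's actual code: `AgentWorkspace`, `UntrackedActionError`, the `MEMORY.md` file name, and the task ID format are all hypothetical names chosen for illustration.

```python
from dataclasses import dataclass, field
from pathlib import Path


class UntrackedActionError(Exception):
    """Raised when an action does not reference an open task."""


@dataclass
class AgentWorkspace:
    """Hypothetical workspace: a markdown memory file plus a task registry."""

    memory_path: Path
    open_tasks: dict[str, str] = field(default_factory=dict)

    def open_task(self, task_id: str, title: str) -> None:
        # Register the task and write it to the markdown log as a checkbox item.
        self.open_tasks[task_id] = title
        self._append(f"- [ ] {task_id}: {title}")

    def record_action(self, task_id: str, note: str) -> None:
        # Structural enforcement: the check lives in the environment,
        # so it holds even if the model ignores its instructions.
        if task_id not in self.open_tasks:
            raise UntrackedActionError(f"no open task {task_id!r}")
        self._append(f"  - {task_id}: {note}")

    def recall(self) -> str:
        # Historical awareness: a new session reads the file back in
        # instead of relying on the context window.
        if not self.memory_path.exists():
            return ""
        return self.memory_path.read_text(encoding="utf-8")

    def _append(self, line: str) -> None:
        with self.memory_path.open("a", encoding="utf-8") as fh:
            fh.write(line + "\n")
```

In this sketch an agent would call `open_task("T-1", "Fix login bug")` before doing any work, and every later `record_action` call must cite `T-1` or another open task. An action that cites an unknown ID raises an error and never reaches the log, which is the point of enforcing the rule in code rather than in a prompt.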

Originally reported by Dev.to