
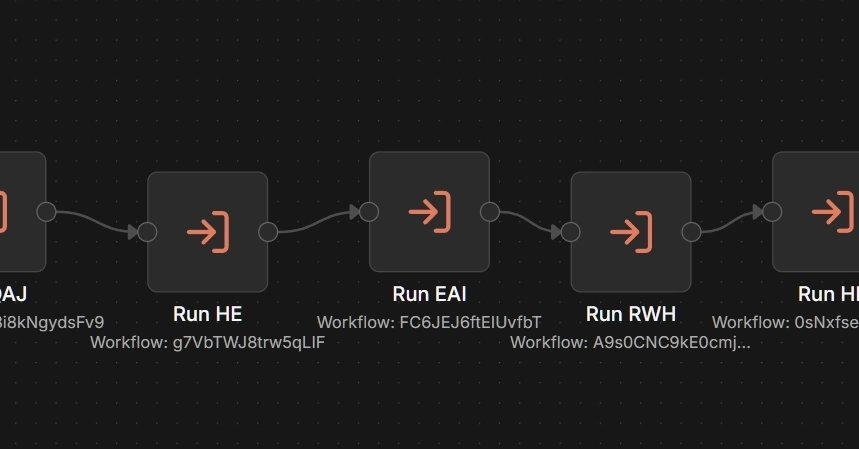

One Dev's $0 AI Pipeline: n8n + Ollama Delivers Six Blog Drafts in 13 Minutes

Token costs add up fast when you draft for multiple blogs, unless you run the model locally. This n8n + Ollama setup produces six drafts in under 15 minutes at zero API cost.
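The article doesn't publish its workflow, so this is only a sketch of the core call an n8n HTTP Request node would make: a non-streaming POST to a locally running Ollama server's `/api/generate` endpoint on its default port 11434. It is written in Python for illustration. The model tag and prompt are placeholders, not the author's choices.

```python
import json
import urllib.request

# Ollama's default local endpoint; assumes `ollama serve` is running on this machine.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_payload(model: str, prompt: str) -> dict:
    """Build a non-streaming request body for Ollama's /api/generate endpoint."""
    return {
        "model": model,    # any model already pulled locally with `ollama pull`
        "prompt": prompt,
        "stream": False,   # return one JSON object instead of a stream of chunks
    }


def generate(model: str, prompt: str, url: str = OLLAMA_URL, timeout: float = 300.0) -> str:
    """Send one prompt to a local Ollama server and return the generated text."""
    body = json.dumps(build_generate_payload(model, prompt)).encode("utf-8")
    request = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        data = json.load(response)
    # With stream=False, the full completion is returned in the "response" field.
    return data["response"]
```

Calling `generate("llama3.1:8b", "Write a 600-word blog draft about self-hosting n8n")` once per topic (a placeholder model tag, assumed to be pulled already) produces one draft per loop iteration. Because every request stays on localhost, there is no per-token bill.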

theAIcatchup

Apr 08, 2026

3 min read

The 60-Second TL;DR

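- Running Ollama locally and orchestrating it with n8n avoids per-token API costs for multi-blog drafting; the setup delivered six WordPress drafts in 13 minutes.

- Splitting model output on simple separators beats asking for JSON and parsing it, and a mid-range GPU is enough for draft-quality output.

- Sticking with "boring" models and scoring drafts in JavaScript keeps the pipeline reliable; human edits handle the final polish.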
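The TL;DR doesn't show the separator format or the scoring rules. Here is a hedged sketch of both ideas in Python, although the article's scoring runs in JavaScript. It assumes a hypothetical output convention in which the prompt asks the model to open each part with a marker line such as `===TITLE===` or `===BODY===`. The scoring checks and weights are also invented for illustration.

```python
import re

# Hypothetical marker format: a line such as "===TITLE===" opens a new section.
MARKER = re.compile(r"^===\s*([A-Z_]+)\s*===$")


def split_sections(raw: str) -> dict[str, str]:
    """Split model output into named sections using separator lines instead of JSON."""
    sections: dict[str, list[str]] = {}
    current = None
    for line in raw.splitlines():
        match = MARKER.match(line.strip())
        if match:
            current = match.group(1)
            sections[current] = []
        elif current is not None:
            sections[current].append(line)
        # Text before the first marker (model preamble or chatter) is ignored.
    return {name: "\n".join(lines).strip() for name, lines in sections.items()}


def score_draft(sections: dict[str, str], min_words: int = 300, max_words: int = 1200) -> int:
    """Give a draft a 0-100 score from deterministic checks (hypothetical rules)."""
    score = 0
    title = sections.get("TITLE", "")
    body = sections.get("BODY", "")
    words = len(body.split())
    if title and len(title) <= 70:
        score += 30  # title present and reasonably short
    if min_words <= words <= max_words:
        score += 40  # body length inside the target range
    if "===" not in body:
        score += 20  # no stray separators leaked into the body
    if "as an ai" not in body.lower():
        score += 10  # no boilerplate model disclaimers
    return score
```

The design point is the failure mode. If the model adds chatter or drops a section, `split_sections` still returns whatever it found and `score_draft` just scores the draft low. By contrast, `json.loads` raises an exception on a reply with a stray trailing comma, which stops the run.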
