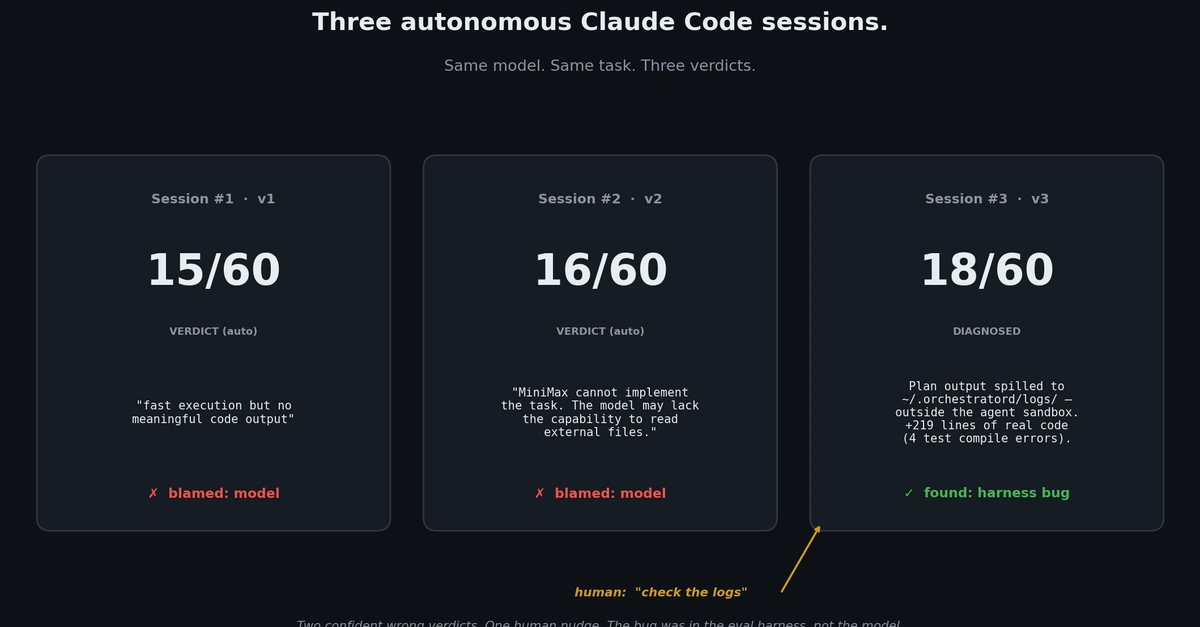

Eval Agent's Double Whiff: Sandbox Bug Fooled LLM Judge

Two confident verdicts. Zero real insight. A postmortem reveals how a sneaky sandbox config turned an LLM judge into a liar, quietly undermining agent evals everywhere.

⚡ Key Takeaways

Worth sharing?

Get the best Open Source stories of the week in your inbox — no noise, no spam.

Originally reported by Dev.to